Many long-established clients accumulate bloat over time. This often happens because they must keep supporting legacy features for existing users, or because they attempt to build overly ambitious software. The result is complex, hard-to-maintain, and error-prone systems.

In contrast, our philosophy is rooted in simplicity. We strive to write minimal code, prioritize clarity, and keep our designs simple. We believe this approach is the best way to build a client that is both fast and resilient. By adhering to these principles, we will be able to iterate quickly and explore next-generation features early, whether they come from the Ethereum roadmap or from innovations by L2s.

Read more about our engineering philosophy here

- Ensure effortless setup and execution across all target environments.

- Be vertically integrated. Keep dependencies to a minimum.

- Be structured in a way that makes it easy to build on top of it, e.g. rollups, VMs, etc.

- Have a simple type system. Avoid generics leaking all over the codebase.

- Have few abstractions. Do not generalize until you absolutely need to. Repeating code two or three times can be fine.

- Prioritize code readability and maintainability over premature optimizations.

- Avoid spreading concurrency all over the codebase. Concurrency adds complexity; use it only where strictly necessary.

`make localnet`

This make target will:

- Build our node inside a Docker image.

- Fetch our fork of the ethereum package, a private testnet on which multiple Ethereum clients can interact.

- Start the localnet with Kurtosis.

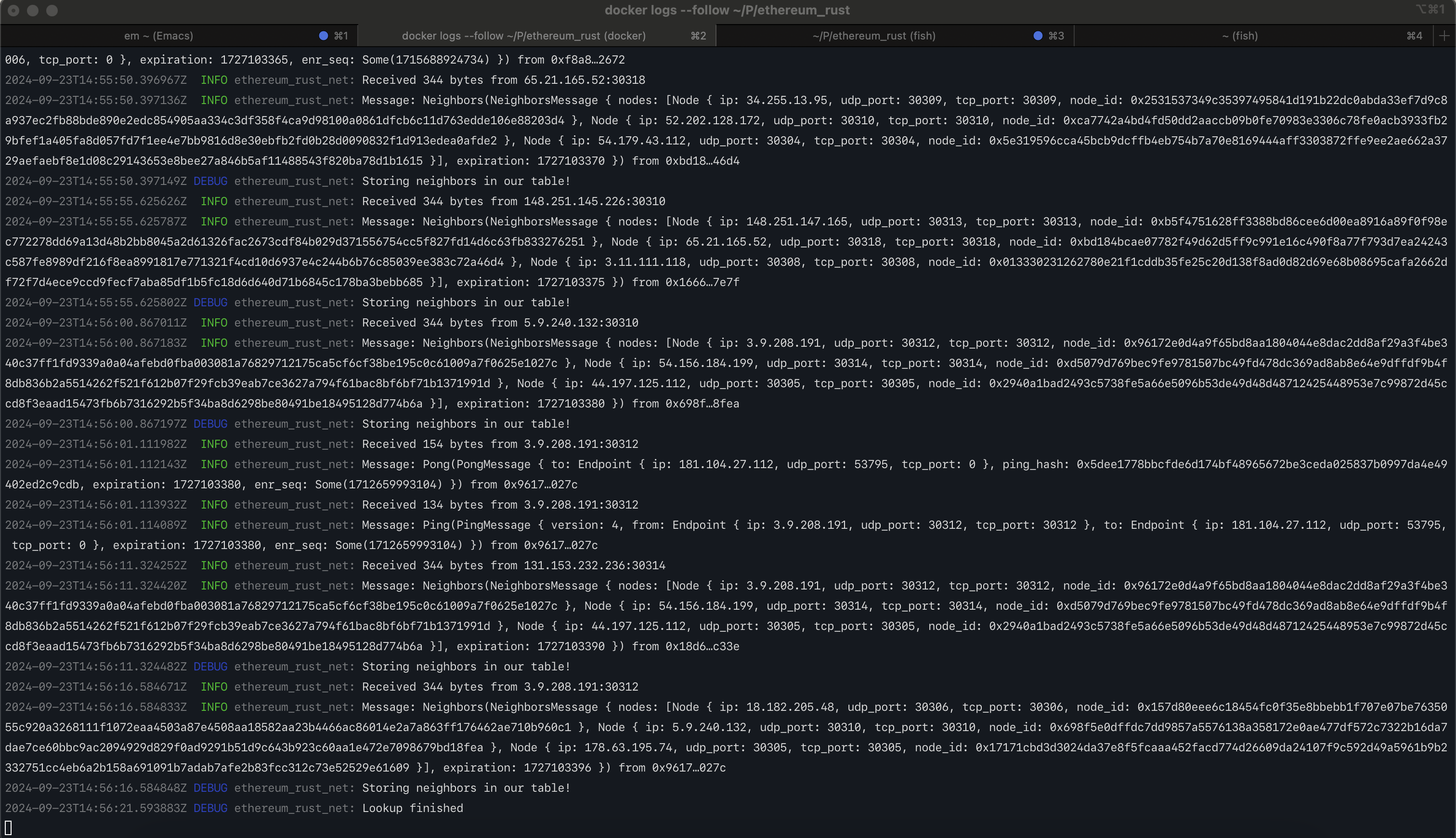

If everything went well, you should see our client's logs (press Ctrl-C to exit).

To stop everything, simply run:

`make stop-localnet`

To build the node, you will need the Rust toolchain:

- First, install asdf.

- Add the Rust plugin: `asdf plugin-add rust https://github.com/asdf-community/asdf-rust.git`

- `cd` into the project and run: `asdf install`

You should now be able to build the client:

`make build`

Currently, the database is libmdbx; it will be set up when you start the client. The location of the database files depends on your OS:

- Mac: `~/Library/Application Support/ethereum_rust`

- Linux: `~/.config/ethereum_rust`
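As an illustration, the directory choice above can be sketched in Rust. This is a minimal sketch, not the client's code: `default_datadir` is a hypothetical helper that only encodes the two documented paths, and the client may resolve them differently (for example through a platform-directories crate).

```rust
use std::env;
use std::path::PathBuf;

/// Hypothetical helper: builds the default database directory listed above
/// from a home directory and an OS name (as reported by `std::env::consts::OS`).
fn default_datadir(home: &str, os: &str) -> Option<PathBuf> {
    let home = PathBuf::from(home);
    match os {
        // Mac: ~/Library/Application Support/ethereum_rust
        "macos" => Some(home.join("Library").join("Application Support").join("ethereum_rust")),
        // Linux: ~/.config/ethereum_rust
        "linux" => Some(home.join(".config").join("ethereum_rust")),
        // No default is documented for other platforms.
        _ => None,
    }
}

fn main() {
    let home = env::var("HOME").unwrap_or_default();
    match default_datadir(&home, env::consts::OS) {
        Some(dir) => println!("database directory: {}", dir.display()),
        None => println!("no documented default for {}", env::consts::OS),
    }
}
```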

You can delete the db with:

`cargo run --bin ethereum_rust -- removedb`

For testing, we use three kinds of tests.

The first kind are the official execution spec tests. You can run them with:

`make test`

This will download the test cases from the official execution spec tests repo and run them with our glue code under `cmd/ef_tests/tests`.

The second kind are each crate's own tests. You can run them like this:

`make test CRATE=<crate>`

For example:

`make test CRATE="ethereum_rust-blockchain"`

Finally, we have end-to-end tests with Hive. Hive is a system that sends RPC commands to our node and expects a certain response. You can read more about it here. Hive tests are categorized by "simulations", and test instances can be filtered with a regex:

`make run-hive SIMULATION=<simulation> TEST_PATTERN=<test-regex>`

This is an example of a Hive simulation called `ethereum/rpc-compat`, which will specifically run the `eth_chainId` and `eth_getTransactionByHash` RPC tests:

`make run-hive SIMULATION=ethereum/rpc-compat TEST_PATTERN="/eth_chainId|eth_getTransactionByHash"`

If you want debug output from Hive, use `run-hive-debug` instead:

`make run-hive-debug SIMULATION=ethereum/rpc-compat TEST_PATTERN="*"`

This example runs every test in the `ethereum/rpc-compat` simulation, with debug output.

Example run:

`cargo run --bin ethereum_rust -- --network test_data/genesis-kurtosis.json`

The `--network` argument is mandatory, as it defines the parameters of the chain.

For more information about the different CLI arguments, check out the next section.

Ethereum Rust supports the following command line arguments:

- `--network <FILE>`: Receives a `Genesis` struct in JSON format. This is the only required argument. You can look at some example genesis files at `test_data/genesis*`.
- `--datadir <DIRECTORY>`: Receives the name of the directory where the database is located.
- `--import <FILE>`: Receives an RLP-encoded `Chain` object (aka a list of `Block`s). You can look at the example chain file at `test_data/chain.rlp`.
- `--http.addr <ADDRESS>`: Listening address for the HTTP RPC server. Default value: localhost.
- `--http.port <PORT>`: Listening port for the HTTP RPC server. Default value: 8545.
- `--authrpc.addr <ADDRESS>`: Listening address for the authenticated RPC server. Default value: localhost.
- `--authrpc.port <PORT>`: Listening port for the authenticated RPC server. Default value: 8551.
- `--authrpc.jwtsecret <FILE>`: Receives the JWT secret used for authenticated RPC requests. Default value: jwt.hex.
- `--p2p.addr <ADDRESS>`: Default value: 0.0.0.0.
- `--p2p.port <PORT>`: Default value: 30303.
- `--discovery.addr <ADDRESS>`: UDP address for P2P discovery. Default value: 0.0.0.0.
- `--discovery.port <PORT>`: UDP port for P2P discovery. Default value: 30303.
- `--bootnodes <BOOTNODE_LIST>`: Comma-separated enode URLs for P2P discovery bootstrap.
- `--log-level <LOG_LEVEL>`: The verbosity level used for logs. Default value: info. Possible values: info, debug, trace, warn, error.
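A minimal sketch of how the documented defaults fit together, assuming a hand-rolled `--flag value` parser. `NodeConfig` and `parse_args` are hypothetical names, the real client may use a different argument parser, and `--datadir` and `--import` are omitted for brevity.

```rust
use std::collections::HashMap;

/// Hypothetical subset of the node settings, using the defaults documented above.
#[derive(Debug, Clone, PartialEq)]
struct NodeConfig {
    network: String,
    http_addr: String,
    http_port: u16,
    authrpc_addr: String,
    authrpc_port: u16,
    authrpc_jwtsecret: String,
    p2p_addr: String,
    p2p_port: u16,
    discovery_addr: String,
    discovery_port: u16,
    bootnodes: Vec<String>,
    log_level: String,
}

const KNOWN_FLAGS: [&str; 12] = [
    "--network", "--http.addr", "--http.port", "--authrpc.addr", "--authrpc.port",
    "--authrpc.jwtsecret", "--p2p.addr", "--p2p.port", "--discovery.addr",
    "--discovery.port", "--bootnodes", "--log-level",
];
const LOG_LEVELS: [&str; 5] = ["info", "debug", "trace", "warn", "error"];

/// Hypothetical parser: reads `--flag value` pairs and fills in defaults for anything omitted.
fn parse_args(args: &[&str]) -> Result<NodeConfig, String> {
    let mut values: HashMap<&str, &str> = HashMap::new();
    let mut iter = args.iter().copied();
    while let Some(flag) = iter.next() {
        if !KNOWN_FLAGS.contains(&flag) {
            return Err(format!("unsupported flag: {flag}"));
        }
        let value = iter.next().ok_or_else(|| format!("missing value for {flag}"))?;
        values.insert(flag, value);
    }

    // Returns the flag's value, or its documented default.
    let get = |flag: &str, default: &'static str| -> String {
        values.get(flag).copied().unwrap_or(default).to_string()
    };
    let port = |flag: &str, default: &'static str| -> Result<u16, String> {
        get(flag, default).parse::<u16>().map_err(|e| format!("invalid {flag}: {e}"))
    };

    // --network is the only required argument.
    let network = get("--network", "");
    if network.is_empty() {
        return Err("--network is required".to_string());
    }
    let log_level = get("--log-level", "info");
    if !LOG_LEVELS.contains(&log_level.as_str()) {
        return Err(format!("invalid --log-level: {log_level}"));
    }
    // --bootnodes is a comma-separated list of enode URLs.
    let bootnodes: Vec<String> = get("--bootnodes", "")
        .split(',')
        .map(str::trim)
        .filter(|s| !s.is_empty())
        .map(String::from)
        .collect();

    Ok(NodeConfig {
        network,
        http_addr: get("--http.addr", "localhost"),
        http_port: port("--http.port", "8545")?,
        authrpc_addr: get("--authrpc.addr", "localhost"),
        authrpc_port: port("--authrpc.port", "8551")?,
        authrpc_jwtsecret: get("--authrpc.jwtsecret", "jwt.hex"),
        p2p_addr: get("--p2p.addr", "0.0.0.0"),
        p2p_port: port("--p2p.port", "30303")?,
        discovery_addr: get("--discovery.addr", "0.0.0.0"),
        discovery_port: port("--discovery.port", "30303")?,
        bootnodes,
        log_level,
    })
}

fn main() {
    let args = ["--network", "test_data/genesis-kurtosis.json", "--http.port", "8546"];
    match parse_args(&args) {
        Ok(config) => println!("{config:#?}"),
        Err(err) => eprintln!("error: {err}"),
    }
}
```

Keeping every default in one place like this makes it easy to check that the help text and the behavior agree.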

Add support to follow a post-Merge localnet as a read-only RPC Node. This first milestone will only support a canonical chain (every incoming block has to be the child of the current head).
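The canonical-chain restriction above amounts to a single check on every incoming block. The sketch below assumes a hypothetical, heavily reduced `Header` type and a `CanonicalChain` tracker; it is not the client's actual import path.

```rust
/// Minimal stand-in for a block header (real headers carry many more fields).
#[derive(Debug, Clone, Copy, PartialEq)]
struct Header {
    number: u64,
    hash: [u8; 32],
    parent_hash: [u8; 32],
}

#[derive(Debug, PartialEq)]
enum ImportError {
    /// The block does not extend the current head, e.g. a sibling or a gap.
    NotChildOfHead,
}

/// Hypothetical head tracker for a node that only follows a single canonical chain.
struct CanonicalChain {
    head: Header,
}

impl CanonicalChain {
    fn import(&mut self, block: Header) -> Result<(), ImportError> {
        // Accept only direct children of the head: matching parent hash and height + 1.
        if block.parent_hash != self.head.hash || block.number != self.head.number + 1 {
            return Err(ImportError::NotChildOfHead);
        }
        self.head = block;
        Ok(())
    }
}

fn main() {
    let genesis = Header { number: 0, hash: [0xaa; 32], parent_hash: [0; 32] };
    let mut chain = CanonicalChain { head: genesis };
    let block1 = Header { number: 1, hash: [1; 32], parent_hash: [0xaa; 32] };
    let sibling = Header { number: 1, hash: [2; 32], parent_hash: [0xaa; 32] };
    println!("block1: {:?}", chain.import(block1)); // block1: Ok(())
    println!("sibling: {:?}", chain.import(sibling)); // sibling: Err(NotChildOfHead)
}
```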

RPC endpoints

- `debug_getRawBlock` ✅
- `debug_getRawHeader` ✅
- `debug_getRawReceipts` ✅
- `debug_getRawTransaction` ✅
- `engine_exchangeCapabilities`
- `engine_exchangeTransitionConfiguration` ✅
- `engine_newPayload` ✅
- `eth_blobBaseFee` ✅
- `eth_blockNumber` ✅
- `eth_call` (at head block) ✅
- `eth_chainId` ✅
- `eth_createAccessList` (at head block) ✅
- `eth_estimateGas` ✅
- `eth_feeHistory`
- `eth_getBalance` (at head block) ✅
- `eth_getBlockByHash` ✅
- `eth_getBlockByNumber` ✅
- `eth_getBlockReceipts` ✅
- `eth_getBlockTransactionCountByNumber` ✅
- `eth_getCode` (at head block) ✅
- `eth_getFilterChanges`
- `eth_getFilterLogs`
- `eth_getLogs`
- `eth_getStorageAt` (at head block) ✅
- `eth_getTransactionByBlockHashAndIndex` ✅
- `eth_getTransactionByBlockNumberAndIndex` ✅
- `eth_getTransactionByHash` ✅
- `eth_getTransactionCount` ✅
- `eth_newBlockFilter`
- `eth_newFilter`
- `eth_newPendingTransactionFilter`
- `eth_uninstallFilter`

See issues and progress: https://github.com/lambdaclass/ethereum_rust/milestone/1
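The endpoints above are called over JSON-RPC 2.0, and numeric results such as block numbers or chain IDs come back as `0x`-prefixed hex quantities. A minimal sketch, assuming hypothetical helper names `request_body` and `decode_quantity`; actually sending the body requires an HTTP client, such as curl against `localhost:8545`.

```rust
/// Hypothetical helper: builds a JSON-RPC 2.0 request body for a method that
/// takes no parameters, such as eth_blockNumber or eth_chainId.
fn request_body(method: &str, id: u64) -> String {
    format!(r#"{{"jsonrpc":"2.0","method":"{method}","params":[],"id":{id}}}"#)
}

/// Hypothetical helper: decodes an Ethereum JSON-RPC quantity (hex with a "0x" prefix).
fn decode_quantity(quantity: &str) -> Option<u64> {
    let digits = quantity.strip_prefix("0x")?;
    if digits.is_empty() || !digits.bytes().all(|b| b.is_ascii_hexdigit()) {
        return None;
    }
    u64::from_str_radix(digits, 16).ok()
}

fn main() {
    // POST this body to the HTTP RPC server (localhost:8545 by default).
    println!("{}", request_body("eth_blockNumber", 1));
    // A response's "result" field, e.g. "0x1b4", decodes to a block number.
    println!("{:?}", decode_quantity("0x1b4")); // Some(436)
}
```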

Implement support for block reorganizations and historical state queries. This milestone involves persisting the state trie to enable efficient access to historical states and implementing a tree structure for the blockchain to manage multiple chain branches.
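To illustrate the tree structure this milestone calls for, here is an in-memory sketch that lets several branches coexist and finds the block where a reorg pivots. `BlockTree` and its methods are hypothetical, block hashes are shortened to `u64`, and persisting the state trie is out of scope.

```rust
use std::collections::HashMap;

/// Stand-in for a 32-byte block hash, to keep the sketch short.
type Hash = u64;

/// Hypothetical in-memory block tree: each block records its parent, so several
/// branches can coexist, and the canonical chain is the branch the head sits on.
struct BlockTree {
    parent_of: HashMap<Hash, Hash>,
    height_of: HashMap<Hash, u64>,
    genesis: Hash,
}

impl BlockTree {
    fn new(genesis: Hash) -> Self {
        Self { parent_of: HashMap::new(), height_of: HashMap::from([(genesis, 0)]), genesis }
    }

    /// Attaches a block to any known block, not only the current head.
    fn insert(&mut self, hash: Hash, parent: Hash) -> bool {
        let Some(&parent_height) = self.height_of.get(&parent) else {
            return false; // unknown parent
        };
        self.parent_of.insert(hash, parent);
        self.height_of.insert(hash, parent_height + 1);
        true
    }

    /// The branch ending at `head`, ordered from genesis to head.
    fn chain_to(&self, head: Hash) -> Vec<Hash> {
        let mut chain = vec![head];
        let mut current = head;
        while let Some(&parent) = self.parent_of.get(&current) {
            chain.push(parent);
            current = parent;
        }
        chain.reverse();
        chain
    }

    /// Last block shared by two branches: where a reorg from one head to the other pivots.
    fn common_ancestor(&self, a: Hash, b: Hash) -> Hash {
        self.chain_to(a)
            .into_iter()
            .zip(self.chain_to(b))
            .take_while(|(x, y)| x == y)
            .last()
            .map(|(x, _)| x)
            .unwrap_or(self.genesis)
    }
}

fn main() {
    // genesis 0 -> 1 -> 2 on one branch, and 1 -> 3 -> 4 on a competing one.
    let mut tree = BlockTree::new(0);
    for (hash, parent) in [(1, 0), (2, 1), (3, 1), (4, 3)] {
        tree.insert(hash, parent);
    }
    println!("reorg pivots at block {}", tree.common_ancestor(2, 4)); // 1
    println!("new canonical chain: {:?}", tree.chain_to(4)); // [0, 1, 3, 4]
}
```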

RPC endpoints

- `engine_forkchoiceUpdated` (without `payloadAttributes`)
- `eth_call` (at any block) ✅
- `eth_createAccessList` (at any block) ✅
- `eth_getBalance` (at any block) ✅
- `eth_getCode` (at any block) ✅
- `eth_getProof` ✅
- `eth_getStorageAt` (at any block) ✅

See issues and progress: https://github.com/lambdaclass/ethereum_rust/milestone/4

Add the ability to build new payloads, so that the consensus client can propose new blocks based on transactions received from the RPC endpoints.
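One common way to build a payload, shown here as an assumption rather than the planned implementation, is to pick pending transactions greedily by tip until the block gas limit is reached. `PendingTx` and `select_transactions` are hypothetical, and the sketch ignores per-sender nonce ordering and base-fee checks.

```rust
/// Hypothetical pending transaction, reduced to what this sketch needs.
#[derive(Debug, Clone, PartialEq)]
struct PendingTx {
    id: u32,
    gas_limit: u64,
    priority_fee: u64, // tip per unit of gas offered to the block producer
}

/// Greedy payload building: take the best-paying transactions first and skip
/// any that would exceed the block gas limit. Ignores per-sender nonce ordering.
fn select_transactions(mut pool: Vec<PendingTx>, block_gas_limit: u64) -> Vec<PendingTx> {
    // Highest tip first; sort_by is stable, so equal tips keep arrival order.
    pool.sort_by(|a, b| b.priority_fee.cmp(&a.priority_fee));
    let mut gas_used = 0u64;
    let mut selected = Vec::new();
    for tx in pool {
        let next = gas_used.saturating_add(tx.gas_limit);
        if next <= block_gas_limit {
            gas_used = next;
            selected.push(tx);
        }
    }
    selected
}

fn main() {
    let pool = vec![
        PendingTx { id: 1, gas_limit: 21_000, priority_fee: 2 },
        PendingTx { id: 2, gas_limit: 50_000, priority_fee: 5 },
        PendingTx { id: 3, gas_limit: 21_000, priority_fee: 1 },
    ];
    let ids: Vec<u32> = select_transactions(pool, 75_000).iter().map(|tx| tx.id).collect();
    println!("{ids:?}"); // [2, 1]
}
```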

RPC endpoints

- `engine_forkchoiceUpdated` (with `payloadAttributes`)
- `engine_getPayload`
- `eth_sendRawTransaction` ✅

Implement the DevP2P protocol, including RLPx with its `p2p` and `eth` features. This will let us receive blocks and transactions from other nodes and send ours to them. We'll add the transactions we receive to the mempool. We'll also download blocks from other nodes when we get payloads whose parent isn't in our local chain.
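Downloading blocks when a payload's parent is unknown means walking back until a locally stored ancestor is reached. A minimal sketch under stated assumptions: `missing_ancestors` is a hypothetical helper, `ask_peers` stands in for the real DevP2P request, hashes are shortened to `u64`, and `max_depth` is an arbitrary safety bound.

```rust
use std::collections::{HashMap, HashSet};

/// Stand-in for a 32-byte block hash.
type Hash = u64;

/// Hypothetical helper: given the parent of an incoming payload, walks back until it
/// reaches a block we already store and returns the blocks to download, oldest first.
/// `ask_peers` stands in for a network request returning a block's parent hash.
fn missing_ancestors(
    parent: Hash,
    local: &HashSet<Hash>,
    ask_peers: impl Fn(Hash) -> Option<Hash>,
    max_depth: usize,
) -> Option<Vec<Hash>> {
    let mut to_fetch = Vec::new();
    let mut current = parent;
    while !local.contains(&current) {
        if to_fetch.len() == max_depth {
            return None; // give up past the safety bound
        }
        to_fetch.push(current);
        current = ask_peers(current)?; // no peer knows this block
    }
    to_fetch.reverse();
    Some(to_fetch)
}

fn main() {
    let local: HashSet<Hash> = HashSet::from([0, 1, 2]);
    // What peers would answer: block -> parent.
    let peers: HashMap<Hash, Hash> = HashMap::from([(5, 4), (4, 3), (3, 2)]);
    let plan = missing_ancestors(5, &local, |h| peers.get(&h).copied(), 128);
    println!("{plan:?}"); // Some([3, 4, 5])
}
```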

RPC endpoints

- `admin_nodeInfo` ✅

See issues and progress: https://github.com/lambdaclass/ethereum_rust/milestone/2

Add the snap sync protocol, which lets us get a recent copy of the blockchain state instead of going through all blocks from genesis. Since we don't support older versions of the spec by design, this is a prerequisite for syncing the node with public networks, including mainnet.

RPC endpoints

- `eth_syncing`

See issues and progress: https://github.com/lambdaclass/ethereum_rust/milestone/3

Ethereum Rust L2 is a feature allowing you to run Ethereum Rust as a ZK-Rollup. The node has the same interface as regular Ethereum Rust, with the addition that block execution is proven and the proof is sent to an L1 network for verification, thus inheriting the L1's security.

The main differences with regular Ethereum Rust are:

- There is no consensus: a single sequencer proposes blocks for the network.

- Block execution is proven using a RISC-V zkVM and its proofs are sent to L1 for verification.

- A set of Solidity contracts, to be deployed to the L1, is included as part of network initialization.

- Two new types of transactions are included: deposit and withdraw transactions.

Users can deposit ETH on the L1 (Ethereum) and receive the corresponding funds on the L2.

| | Name | Description | Status |
|---|---|---|---|
| Contracts | CommonBridge | Deposit method implementation | 🏗️ |
| | BlockExecutor | Commit and verify methods (placeholders for this stage) | ✅ |
| Operator | Sequencer | Proposes new blocks to be executed | ✅ |
| | L1Watcher | Listens for and handles L1 deposits | ✅ |
| | L1TxSender | Commits new block proposals and sends block execution proofs to be verified | 🏗️ |
| | Deposit transactions handling | New transaction type for minting funds corresponding to deposits | 🏗️ |
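The deposit flow in the table can be sketched as follows: the L1 watcher turns each new L1 deposit into an L2 mint transaction, and executing it credits the recipient. All types here are hypothetical, deduplication by a deposit `id` is an assumption of this sketch, and the bridge contract and event decoding are not modeled.

```rust
use std::collections::{HashMap, HashSet};

/// Hypothetical deposit observed on L1 (e.g. through the CommonBridge contract).
struct L1Deposit {
    id: u64,
    recipient: String,
    amount_wei: u128,
}

/// Hypothetical L2 transaction that mints funds for a deposit.
#[derive(Debug, Clone, PartialEq)]
struct MintTx {
    recipient: String,
    amount_wei: u128,
}

#[derive(Default)]
struct L2Node {
    handled_deposits: HashSet<u64>,
    balances: HashMap<String, u128>,
}

impl L2Node {
    /// Watcher step: one mint transaction per deposit not handled before.
    fn handle_deposits(&mut self, deposits: &[L1Deposit]) -> Vec<MintTx> {
        let mut mints = Vec::new();
        for deposit in deposits {
            // HashSet::insert returns false if this deposit id was already seen.
            if self.handled_deposits.insert(deposit.id) {
                mints.push(MintTx { recipient: deposit.recipient.clone(), amount_wei: deposit.amount_wei });
            }
        }
        mints
    }

    /// Executing a mint credits the recipient's L2 balance.
    fn execute_mint(&mut self, tx: &MintTx) {
        *self.balances.entry(tx.recipient.clone()).or_insert(0) += tx.amount_wei;
    }
}

fn main() {
    let mut node = L2Node::default();
    let deposits = vec![
        L1Deposit { id: 1, recipient: "alice".to_string(), amount_wei: 1_000 },
        L1Deposit { id: 2, recipient: "alice".to_string(), amount_wei: 500 },
    ];
    for tx in node.handle_deposits(&deposits) {
        node.execute_mint(&tx);
    }
    // Seeing the same deposits again mints nothing.
    assert!(node.handle_deposits(&deposits).is_empty());
    println!("alice: {} wei", node.balances["alice"]); // alice: 1500 wei
}
```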

The network supports basic L2 functionality, allowing users to deposit and withdraw funds to join and exit the network, while also interacting with the network as they do normally on the Ethereum network (deploying contracts, sending transactions, etc).

| | Name | Description | Status |
|---|---|---|---|
| Contracts | CommonBridge | Withdraw method implementation | ❌ |
| | BlockExecutor | Commit and verify implementation | ❌ |
| | Verifier | Use Solidity verifier | ❌ |
| Operator | ProofDataProvider | Feeds the ProofDataClient with block data to be proven and delivers proofs to the L1TxSender for L1 verification | 🏗️ |
| | Withdraw transactions handling | New transaction type for burning funds on L2 and unlocking funds on L1 | ❌ |
| Prover | ProofDataClient | Asks for block execution data to prove, generates proofs of execution, and submits proofs to the ProofDataProvider | 🏗️ |

- Use blobs (EIP-4844) for data availability on L1 instead of calldata, and send state diffs instead of the entire state.

- Support native account abstraction on the L2.

- Base token, common bridge for multiple L2s

- Validium support (i.e. other data availability solutions instead of Ethereum).

In the next sections, you can dive further into the code internals.